Stanford Study Questions Multi-Agent AI Cost Benefits; Anthropic Faces Service Pressure

New Stanford research suggests single-agent AI systems often outperform multi-agent setups when compute budgets are equal, while Anthropic explores service rationing options.

Stanford University researchers have published findings suggesting that single-agent artificial intelligence systems often match or outperform multi-agent architectures on complex reasoning tasks when both are given equal computational budgets. The study challenges the growing enterprise trend toward multi-agent AI frameworks, which use multiple models working together to solve problems.

The research, conducted by Dat Tran and Douwe Kiela, focused on multi-hop reasoning tasks that require connecting disparate pieces of information. They imposed strict "thinking token" budgets to ensure fair comparisons between single-agent and multi-agent systems. Under these controlled conditions, single-agent systems typically delivered higher accuracy while consuming fewer computational resources.
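To make the idea of a budget-matched comparison concrete, here is a minimal sketch of how one fixed thinking-token budget might be divided in a multi-agent setup. It is an illustration only, not the researchers' method: the function name `split_budget`, the linear agent pipeline, and the figures of 8,000 total tokens and 500 tokens per handoff are all assumptions chosen for the example.

```python
from dataclasses import dataclass


@dataclass
class BudgetSplit:
    per_agent_tokens: int  # thinking tokens each agent may spend
    handoff_tokens: int    # tokens spent on messages between agents
    unused_tokens: int     # remainder that cannot be divided evenly


def split_budget(total_tokens: int, num_agents: int, tokens_per_handoff: int) -> BudgetSplit:
    """Divide one fixed thinking-token budget across a chain of agents.

    Hypothetical model: a linear pipeline (agent 1 -> agent 2 -> ... -> agent N),
    so there are N - 1 handoffs, each costing `tokens_per_handoff`.
    """
    if num_agents < 1:
        raise ValueError("num_agents must be at least 1")
    handoff_tokens = (num_agents - 1) * tokens_per_handoff
    if handoff_tokens > total_tokens:
        raise ValueError("handoffs alone exceed the budget")
    remaining = total_tokens - handoff_tokens
    per_agent, unused = divmod(remaining, num_agents)
    return BudgetSplit(per_agent, handoff_tokens, unused)


# Illustrative numbers only; they do not come from the study.
single = split_budget(total_tokens=8000, num_agents=1, tokens_per_handoff=500)
multi = split_budget(total_tokens=8000, num_agents=4, tokens_per_handoff=500)

print(single.per_agent_tokens)  # 8000
print(multi.per_agent_tokens)   # 1625
print(multi.handoff_tokens)     # 1500
```

Under these assumed numbers, the single agent can spend the full budget reasoning in one context, while each of the four agents gets roughly a fifth of it once handoff messages are paid for. That gap is one simple way to picture why equal budgets can favor the single agent.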

According to the researchers, multi-agent systems suffer from inherent communication bottlenecks: information can be lost each time one agent hands off work to another. They coined the term "swarm tax" to describe the computational overhead enterprises may be paying for multi-agent architectures without corresponding performance benefits. The study found that a single agent, working within one continuous context, retains access to a richer representation of the task and is therefore more information-efficient.

However, the researchers identified scenarios where multi-agent systems prove superior, particularly when handling degraded contexts such as noisy data or corrupted information. In these cases, the structured filtering and verification capabilities of multi-agent systems can more reliably extract relevant information than single agents.

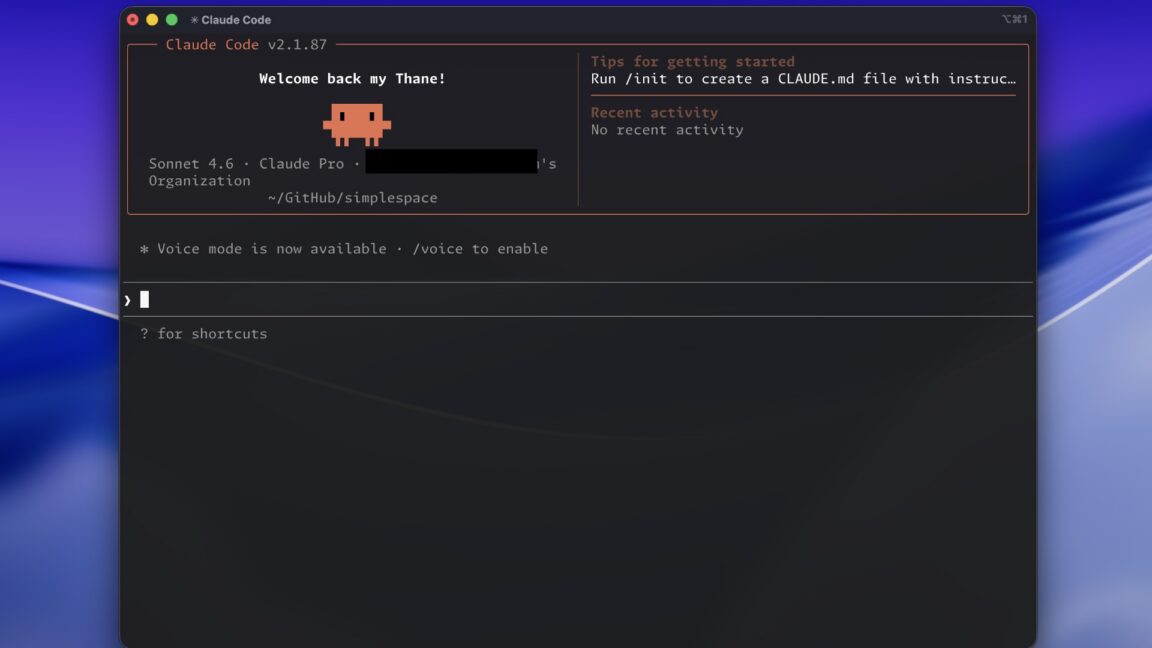

Meanwhile, AI company Anthropic has been exploring adjustments to its service offerings in response to high demand. The company reportedly tested removing Claude Code from its Pro plan as it examines new approaches to rationing its AI services. Separately, several federal agencies have gained access to Anthropic's new cybersecurity model, Mythos Preview, but the Cybersecurity and Infrastructure Security Agency reportedly has not yet received access to the tool.

The Stanford findings suggest enterprises should reserve multi-agent systems for specific use cases where single agents hit performance ceilings, rather than treating multiple agents as automatically superior. As AI models continue improving their internal reasoning capabilities, the researchers expect multi-agent frameworks to evolve into targeted engineering solutions rather than default architectures.